"ANNIE": A Local Reasoning Assistant Interface

Published on:

Annie is a fully local reasoning assistant that runs multiple large language models on your own hardware. Her router picks magistral:24b for heavy logic or qwen3:14b for lighter chat, so every prompt is answered by the best tool available. Routing is handled by a lightweight "llama3" model behind a built-in "auto" mode, which automatically chooses between the models to better match each prompt's needs.
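
As a rough illustration of the "auto" routing idea, here is a minimal sketch. The function names, the label strings ("reasoning", "chat") and the keyword classifier are assumptions made up for this example; in Annie the decision is made by the small "llama3" router model, which the `classify` callable only stands in for.

```python
from typing import Callable

# Model tags taken from the article; everything else here is hypothetical.
HEAVY_MODEL = "magistral:24b"
LIGHT_MODEL = "qwen3:14b"


def choose_model(prompt: str, mode: str, classify: Callable[[str], str]) -> str:
    """Return the model tag to use for this prompt.

    mode:     "auto" lets the classifier decide; any other value is treated
              as an explicit model tag picked by the user.
    classify: stand-in for the small router model; expected to return a
              label such as "reasoning" or "chat".
    """
    if mode != "auto":
        return mode
    label = classify(prompt).strip().lower()
    # Anything other than a "reasoning" label falls back to the lighter model.
    return HEAVY_MODEL if label.startswith("reasoning") else LIGHT_MODEL


def keyword_classifier(prompt: str) -> str:
    """Toy classifier so the sketch runs without a model server."""
    hard_words = ("prove", "derive", "debug", "step by step", "optimi")
    text = prompt.lower()
    return "reasoning" if any(word in text for word in hard_words) else "chat"


if __name__ == "__main__":
    print(choose_model("Prove that sqrt(2) is irrational", "auto", keyword_classifier))
    print(choose_model("hey, how's it going?", "auto", keyword_classifier))
```

Passing the classifier in as a callable keeps the routing rule separate from whatever produces the label, so the toy keyword check could be swapped for a real call to the router model without touching `choose_model`.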

Beyond text, Annie sees, speaks and listens. An AI-powered VisionEngine using LLaVA and Tesseract OCR lets her caption screenshots. She can also view files through built-in Python libraries, so diagrams or error logs you drop into the chat are handled much as they would be in interfaces such as OpenAI's GPT UI, while a built-in VoiceEngine handles both TTS and wake-word dictation.

The Tkinter UI streams replies token-by-token, complete with syntax-highlighted code blocks and an expandable “Reasoning” pane that lets you watch Annie's real-time thought process as she works through the prompt.
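
One way a streamed reply could be divided between the “Reasoning” pane and the main answer is sketched below. It assumes the model wraps its reasoning in `<think>...</think>` tags, which is an assumption about the output format rather than something the article states, and the function name `split_stream` is hypothetical. The Tkinter side is left out; the generator only labels each piece of text with the pane it belongs to.

```python
from typing import Iterable, Iterator, Tuple

# Assumed reasoning delimiters; adjust to whatever the model actually emits.
OPEN_TAG, CLOSE_TAG = "<think>", "</think>"


def split_stream(chunks: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (pane, text) pairs, where pane is "reasoning" or "answer"."""
    buffer = ""
    in_reasoning = False
    for chunk in chunks:
        buffer += chunk
        while True:
            tag = CLOSE_TAG if in_reasoning else OPEN_TAG
            pane = "reasoning" if in_reasoning else "answer"
            idx = buffer.find(tag)
            if idx != -1:
                # Emit the text before the tag, drop the tag, switch panes.
                if idx:
                    yield pane, buffer[:idx]
                buffer = buffer[idx + len(tag):]
                in_reasoning = not in_reasoning
                continue
            # Hold back a tail that might be a tag split across two chunks.
            keep = 0
            for n in range(min(len(tag) - 1, len(buffer)), 0, -1):
                if tag.startswith(buffer[-n:]):
                    keep = n
                    break
            emit = buffer[:len(buffer) - keep]
            if emit:
                yield pane, emit
            buffer = buffer[len(buffer) - keep:]
            break
    # Flush whatever is left once the stream ends.
    if buffer:
        yield ("reasoning" if in_reasoning else "answer"), buffer


if __name__ == "__main__":
    demo = ["<thi", "nk>check ", "units</th", "ink>42 ", "km"]
    for pane, text in split_stream(demo):
        print(f"{pane}: {text!r}")
```

In a GUI, each yielded pair would be appended to the matching text widget as it arrives, which keeps the token-by-token feel while hiding the tags themselves.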

Under the hood, Annie maintains long-term memory with an embedding model and a FAISS-powered vector store. She fuzzy-corrects slang, indexes your project code for inline retrieval and, only when needed, fetches live results through Google or DuckDuckGo. This workflow lets her cite your own source files, weave in recent web context, and respond in natural language or executable code without ever leaving your machine.
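
The memory lookup described above can be pictured with a small, self-contained sketch. This is not Annie's implementation: a word-count vector stands in for the embedding model, and a brute-force cosine search stands in for the FAISS index, so that the example runs with only the standard library. The class and function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """Brute-force stand-in for a vector index such as FAISS."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 3) -> List[Tuple[float, str]]:
        """Return up to k (score, text) pairs, best match first."""
        q = embed(query)
        scored = [(cosine(q, vec), text) for text, vec in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


if __name__ == "__main__":
    store = MemoryStore()
    store.add("utils.py defines parse_config for loading YAML settings")
    store.add("The user prefers dark mode in the GUI")
    store.add("router.py selects a model for each prompt")
    for score, text in store.search("which file loads YAML settings", k=2):
        print(f"{score:.2f}  {text}")
```

The retrieved snippets would then be placed into the prompt so the model can cite them. Swapping the toy pieces for a real embedding model and a proper vector index changes the quality and speed of the lookup, not the overall shape of the flow.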

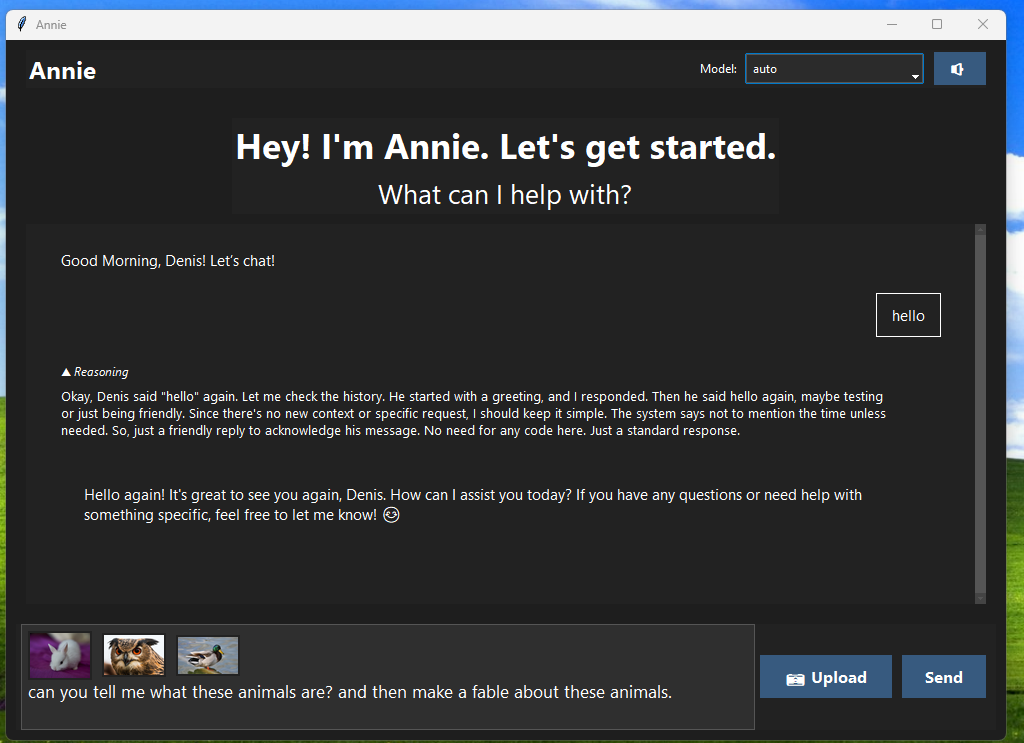

Inside Annie — Screenshots

What the ANNIE GUI looks like

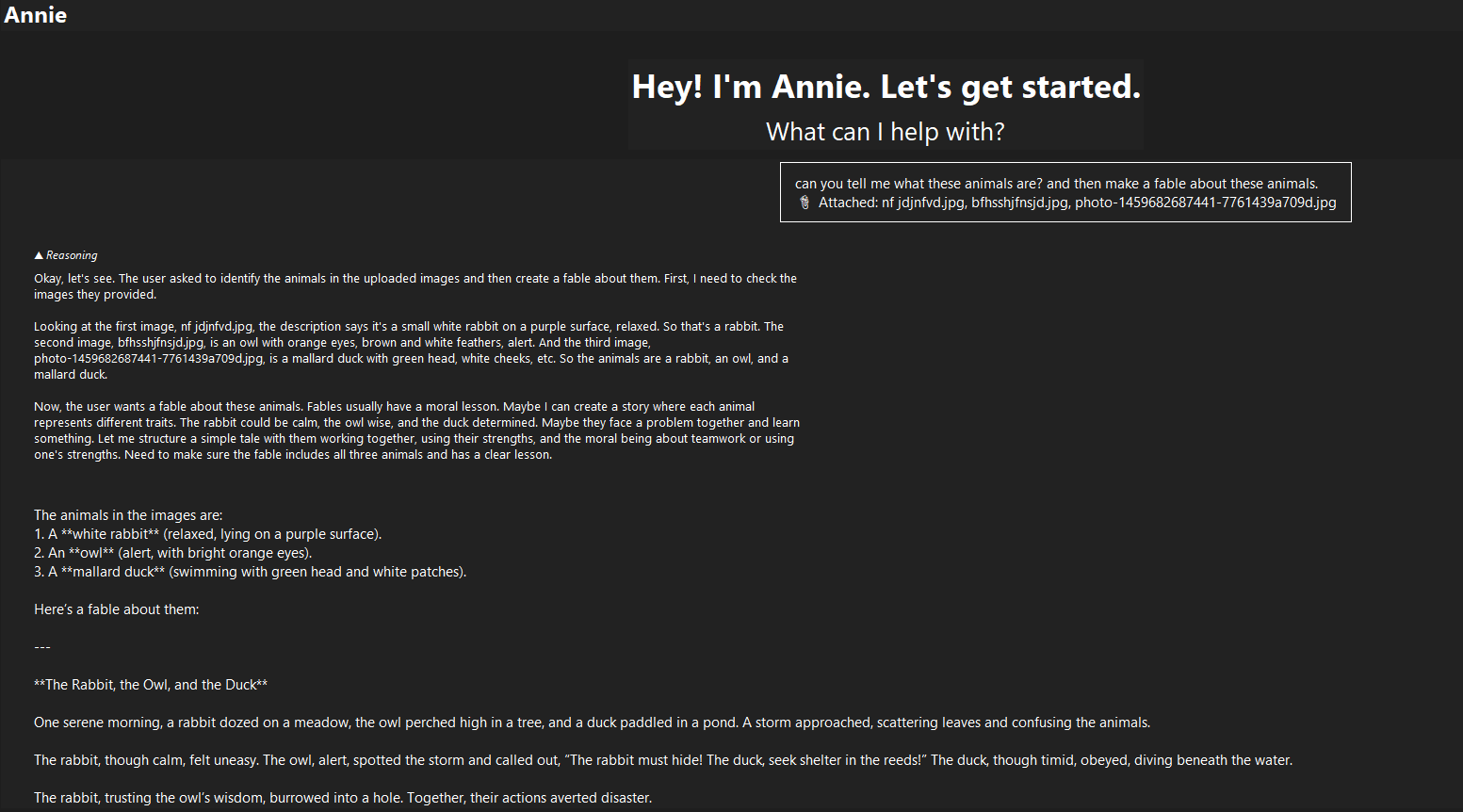

VisionEngine summarising an uploaded screenshot, followed by a response to the prompt with the dropdown “Reasoning” pane